Fun with Decision Trees using R and SQL Server

As those who have been reading this blog know, I’ve recently been spending a lot of time doing statistical analysis with R and SQL Server, and I have really been enjoying digging into the various bits of functionality on offer.

One thing I’ve learned over the years is that I am a very “classification-oriented” individual when it comes to working with data. I work best with data sets that I understand and that I’ve been able to sort into the various buckets that make sense to me based on what I’m trying to accomplish with the data.

The problem with this need for nice and tidy data classification is that it doesn’t work well when you don’t really have a complete understanding of the data set you’re working with. This especially becomes an issue if you’re trying to mine the data you have in order to predict future outcomes based on past performance (a requirement that is becoming more and more important to “Data Scientists” as more and more organizations make the shift to true data-driven processes).

Understanding Data

If you read my previous post on creating a large SQL Server database, you’ve seen some of the data that I am playing around with. Obviously there is a lot of interesting information locked within the mortgage loan application data stored in the HMDA database. One specific use case for this data might be to look at some of the deciding factors behind home loans being granted and create some predictive models from them. For example, I might want to predict the outcome of a home loan application based on factors like the purpose of the loan, the income of the applicant, and some other factors such as race. This could be very useful for a mortgage company deciding who to target with an ad campaign, or for researching questions such as whether race or sex correlates with the amount of a home loan given. To address this use case, we need a good understanding of the data we’re working with, and we need to see what it looks like when shaped into a “Decision Tree” (for more information on what exactly a decision tree is, take a look at: http://en.wikipedia.org/wiki/Decision_tree )

Creating a Data Set to Work With

If we want to use the home mortgage disclosure data to look at past performance of home loans, the first thing we need to do is create a manageable data set from which to build a model. Since I live in El Paso County, Colorado, I’ll create a table that just details information on home loans in this area. Given the database that was created earlier (see previous post), we can create a subset of the data with the following query:

    SELECT
        loan_purpose AS [purpose]
        ,loan_amount AS [amount]
        ,CASE WHEN applicant_race = 'White' THEN applicant_race ELSE 'Non-White' END AS [race]
        ,applicant_sex AS [sex]
        ,applicant_income AS [income]
        ,CASE WHEN denial_reason = 'Approved' THEN denial_reason ELSE 'Denied' END AS [status]
    INTO
        tblElPasoCountyLoanStatus
    FROM
        vColoradoLoans
    WHERE county = 'El Paso County'

What this will do is create a simple table that has 6 columns. I’ve simplified the data slightly so that some of the factors (race, denial reason) are binary values rather than multi-valued categories. While this transformation is not strictly necessary, it helps simplify the output for the purposes of this discussion.

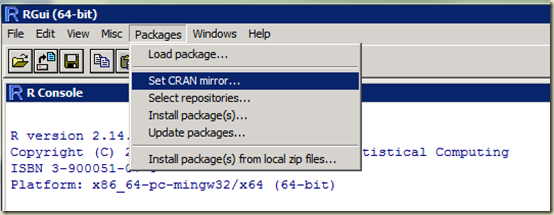

Loading the Appropriate Packages and Libraries in R

Once I’ve created the table above in SQL Server, I can load the data into R and begin analysis. Before we get too deep in the creation of the decision tree, I should mention that I am going to use a package called “rpart” as well as a package called “rpart.plot”. If you are really curious and would like to know the science behind the rpart package, there is a very dry document here that explains the algorithm in detail: http://www.mayo.edu/hsr/techrpt/61.pdf

The rpart package can be found here: http://cran.stat.ucla.edu/web/packages/rpart/index.html

and rpart.plot can be found here: http://cran.stat.ucla.edu/web/packages/rpart.plot/index.html

Of course, you really don’t need to know exactly where the packages are; you can install them from the R console as follows:
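A minimal sketch of that install step, assuming the standard install.packages function from base R and the two package names mentioned above:

```r
# One-time step; needs an internet connection and access to a CRAN mirror.
install.packages(c("rpart", "rpart.plot"))
```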

When you execute the above command in the R console, you will be prompted for the mirror site you wish to use, and the package will be downloaded, unpacked and installed. Once the packages are installed, you can load them into your R environment as follows:
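A sketch of the load step, assuming both packages installed cleanly:

```r
# Attach both packages to the current R session.
library(rpart)       # builds the tree
library(rpart.plot)  # draws the tree
```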

Connecting to SQL Server Data

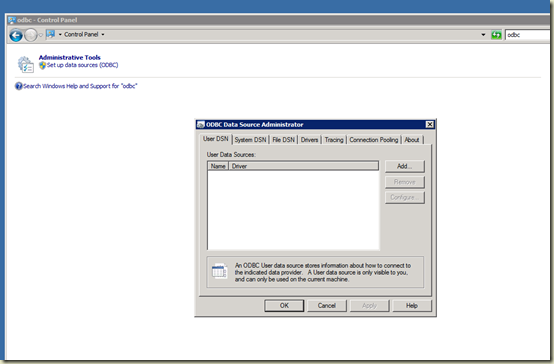

Once the libraries are loaded, we need to obtain the data we’re going to work with. If you haven’t worked with SQL Server data in R before, you might want to read my previous post on connecting R to SQL Server via the ODBC library. First we need to set up an ODBC channel to connect to the table we created above. This is done via the following command:

(Remember that “HMDAData” is the name of the ODBC DSN I created to connect to my database)
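A minimal sketch of opening the channel, assuming the RODBC package (which provides odbcConnect) and a DSN that handles authentication on its own. The variable name channel is an assumption chosen to match the wording of this post:

```r
library(RODBC)  # assumed ODBC package; provides odbcConnect() and sqlFetch()

# "HMDAData" is the DSN name used in this post. It must already be defined
# as an ODBC data source on the machine running R.
channel <- odbcConnect("HMDAData")
```

When you are finished with the data, odbcClose(channel) releases the connection.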

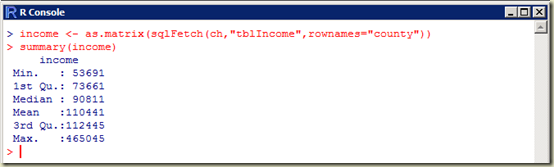

Once the channel is created, I can load the data from the table via the sqlFetch command in R as follows:
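A sketch of the fetch, assuming RODBC and the table name from the query earlier in this post. Passing stringsAsFactors = TRUE is my assumption, so that the text columns come back as factors:

```r
library(RODBC)

# Reuse the channel from the previous step, or open it if this runs on its own.
if (!exists("channel")) channel <- odbcConnect("HMDAData")

# Pull the whole table into a data frame. Text columns are converted to
# factors so that summary() reports a count per category.
loanstatus <- sqlFetch(channel, "tblElPasoCountyLoanStatus",
                       stringsAsFactors = TRUE)
```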

Examining the Data

This loads the data from the table into the R variable “loanstatus”. You can view a summary of it by calling summary(loanstatus).

Already you can see that we’ve extracted some good information out of the database. (As a side note, I think this is VERY COOL! Think of all the SQL queries I’d have to run to get this same information from the table I created earlier.)

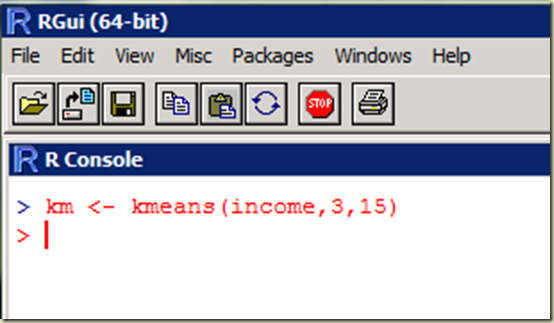

Now that we have the data in an R variable, we can start to create a decision tree. Since we ultimately want to use this data to predict the amount of a loan based on certain factors such as race, sex and income, we’ll create a regression tree shaped to those parameters. We will use the rpart function to create the tree. rpart is a very simple function to call; its main parameters are:

- formula – The formula is specified in the following format: outcome ~ predictor1 + predictor2 + predictor3 etc.

- data – The specific data frame to use

- method – “class” for a classification tree or “anova” for a regression tree

Remember that within R, you can always type ? <function> to get a full description of a particular function.

In our case, the rpart command would look like this:
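A sketch of what that call might look like. The exact set of predictors is an assumption based on the columns in the table and on the splits described later in this post. The data frame built inside the if block is made-up stand-in data (not HMDA values) that exists only so the snippet runs without the database:

```r
library(rpart)

# Stand-in data, used only when loanstatus has not been loaded from SQL Server.
# Every value here is randomly generated for illustration.
if (!exists("loanstatus")) {
  set.seed(42)
  n <- 500
  loanstatus <- data.frame(
    purpose = factor(sample(c("Home purchase", "Refinancing", "Home improvement"),
                            n, replace = TRUE)),
    race    = factor(sample(c("White", "Non-White"), n, replace = TRUE)),
    sex     = factor(sample(c("Male", "Female"), n, replace = TRUE)),
    income  = round(runif(n, 20, 300))
  )
  loanstatus$amount <- round(2 * loanstatus$income + rnorm(n, sd = 40))
}

# Regression tree: amount is the outcome, the other columns are predictors.
tree <- rpart(amount ~ purpose + race + sex + income,
              data = loanstatus, method = "anova")
```

Using status as the outcome with method = "class" would produce a classification tree instead.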

Once we’ve created the tree variable (this can be named anything, I just kept it simple and named it “tree” here) we can look at a summary and determine what it looks like:
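A sketch of inspecting the tree, assuming the variable is named tree as above. The fallback line fits a stand-in tree on car.test.frame, a data set bundled with rpart, only so the snippet runs on its own:

```r
library(rpart)

# Fallback so the snippet is runnable without the loan data.
if (!exists("tree")) {
  tree <- rpart(Mileage ~ Weight + Type, data = car.test.frame, method = "anova")
}

summary(tree)  # full detail for every node, including candidate splits
printcp(tree)  # compact table of cp values and cross-validated error
```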

(there are a total of 13 nodes in my specific example, and I can’t paste the entire tree here in text form)

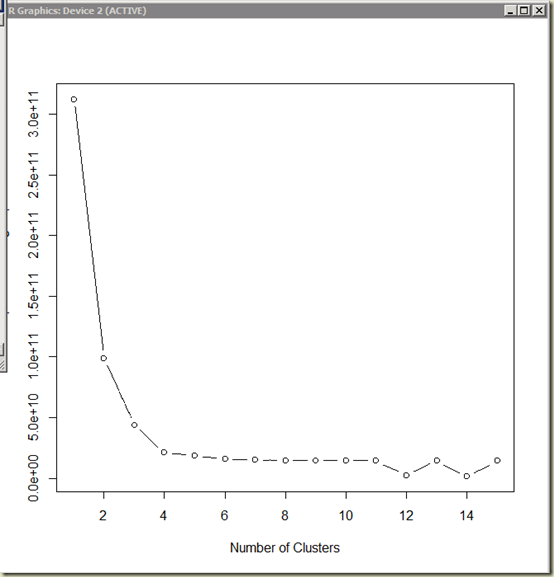

One big step in determining how useful a particular decision tree is going to be is to examine the “complexity parameter” (“cp” for short) at each size of the tree. rpart cross-validates the tree at each cp value, and the resulting error is used to decide where to prune the tree and remove the portions that are “over fitted” (you can read about the terminology here: http://www.statmethods.net/advstats/cart.html ). Since I am a very visual person, I like to see this cross-validation data in chart form as follows:
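A sketch of that chart, assuming rpart's plotcp function and a fitted tree named tree. The fallback line fits a stand-in tree on rpart's bundled car.test.frame data only so the snippet runs on its own:

```r
library(rpart)

# Fallback so the snippet is runnable without the loan data.
if (!exists("tree")) {
  tree <- rpart(Mileage ~ Weight + Type, data = car.test.frame, method = "anova")
}

# Plot cross-validated relative error against cp, with tree size on the top axis.
plotcp(tree)
```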

This generates a nice graph showing me the size of the tree, the cross-validated error at each size, and the corresponding cp:

Since the tree that I am working with is relatively small, I am not going to worry about pruning it here and removing the “bad” cp values. To generate the decision tree, use the following command:
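A sketch of the plot command, assuming the prp function from rpart.plot (the rpart.plot function in the same package accepts the same two arguments). The fallback line fits a stand-in tree on rpart's bundled car.test.frame data only so the snippet runs on its own:

```r
library(rpart)
library(rpart.plot)

# Fallback so the snippet is runnable without the loan data.
if (!exists("tree")) {
  tree <- rpart(Mileage ~ Weight + Type, data = car.test.frame, method = "anova")
}

# type = 4 labels every node; extra = 1 adds the observation count to each node.
prp(tree, type = 4, extra = 1)
```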

(Remember that you can use the ? command to get a full listing of all options for a given function. In this case, type=4 instructs R to label every node in the plot, not just the leaves, and extra=1 instructs R to include the number of observations that fall in each node)

With this visual of the tree, I can see that income seems to be a deciding factor, and it splits at approximately 90,000. Following the tree to the left, for those with less than 90K income, we see a split for Home Improvement loans versus Home Purchase and refinance. For the purchase and refinance, we see another split at approximately 52K income. Back on the right side of the tree we see a split at approximately 208K income, with the same split for home improvement loans versus purchase and refinance.

Conclusion

Being the data geek that I am, I could continually refine this model and graph it to start finding the patterns and determining just exactly how the data is shaped. At that point I could feed additional data into the model and use it to predict outcomes based on past performance. There are many things that can be done with Decision Trees and I’ve only scratched the surface here with this post. I’ll be writing many more of these posts in the future as I continue to explore the magic of data mining with R.